Most AI initiatives don’t fail. They get quietly deprioritized.

Budgets are approved, pilots show results, and teams report improved accuracy and efficiency. Yet when it’s time to scale, executive enthusiasm cools. AI initiatives lose momentum when leadership lacks visibility into measurable business value.

As organizations increase AI investments, executive teams increasingly expect measurable ROI tied directly to strategic business objectives rather than isolated technical wins.

This is the moment where AI stops being a strategic investment and starts being viewed as an experiment, unless ROI is measured in a way executives recognize and trust. AI has recently become a boardroom topic. CEOs, CFOs, and executive leadership teams are actively funding AI initiatives, but they are also asking harder questions than ever before.

The most common question is simple but decisive: How do we know AI is actually delivering business value?

AI promises faster decisions, operational efficiency, better customer experiences, and competitive advantages. The rapid rise of generative AI has further accelerated executive interest, pushing organizations to evaluate how intelligent systems contribute to measurable business outcomes. However, without a clear way to measure return on investment, AI initiatives risk being perceived as experiments rather than strategic enablers. This perception often determines whether AI projects scale across the enterprise or stall after a pilot phase.

This is where AI ROI fundamentally changes the conversation. Measuring AI ROI translates artificial intelligence from technical capability into business outcomes that executives understand, trust, and are willing to fund long term.

In this blog, we explore how enterprises can measure AI ROI effectively, which KPIs matter most to executive leadership, and how outcome-driven dashboards play a critical role in accelerating AI adoption at scale.

Why Measuring AI ROI Matters

As AI adoption matures, leadership expectations evolve with it. Executives are no longer impressed by proof-of-concept success or isolated efficiency improvements. They want clarity on how AI contributes to margins, growth, resilience, and long-term competitiveness. Measuring AI ROI matters because it answers those questions in a language leadership already uses to evaluate every other investment.

AI ROI also creates alignment across the organization. Without a shared measurement framework, AI teams optimize technical success while executives evaluate business impact. This disconnect creates friction, misinterpretation, and skepticism. ROI bridges that gap by establishing common success criteria that both technical and business stakeholders can rely on.

For many business leaders, AI measurement frameworks provide the starting point for evaluating whether enterprise AI adoption is contributing to long-term growth, operational resilience, and sustainable business value.

Most importantly, ROI measurement influences decision velocity. When leadership has confidence in how value is measured, decisions to scale, optimize, or sunset AI initiatives happen faster. Organizations that measure AI ROI consistently do not just deploy more AI; they deploy it more effectively.

Executives Invest in Outcomes, Not Algorithms

Executives rarely question whether an AI model is sophisticated enough. What they question is whether it materially changes the business. A model can outperform benchmarks and still fail to earn executive support if its impact on cost, revenue, or risk is unclear. From a leadership perspective, relevance always outweighs technical novelty.

Executives evaluating AI projects increasingly prioritize tangible operational outcomes over technical sophistication, especially when assessing large-scale AI implementation initiatives.

This disconnect becomes visible in executive reviews. AI teams often present accuracy, precision, or latency metrics, expecting those numbers to speak for themselves. Instead, executives ask follow-up questions: What decisions changed because of this? What costs were removed? What risks are now lower? If those answers are not clear, confidence erodes quickly.

AI ROI reframes the conversation. It shifts focus from how well a model performs to what the organization can now do differently. When outcomes are explicit, executives stop debating the technology and start discussing expansion.

How AI ROI Aligns AI with Business Strategy

AI ROI creates strategic discipline. When ROI expectations are defined early, AI initiatives are no longer driven by curiosity or tool availability, but by business constraints. Teams begin with problems that are expensive, slow, or risky, and then evaluate whether AI can meaningfully improve them.

This alignment improves prioritization. Not every AI use case deserves enterprise investment, even if it is technically interesting. ROI frameworks help leadership distinguish between initiatives that deliver tactical efficiency and those that unlock strategic leverage.

Over time, this clarity changes how AI is perceived. Instead of a collection of disconnected projects, AI becomes a portfolio of investments with varying risk and return profiles. This perspective allows executives to allocate capital with confidence and intent.

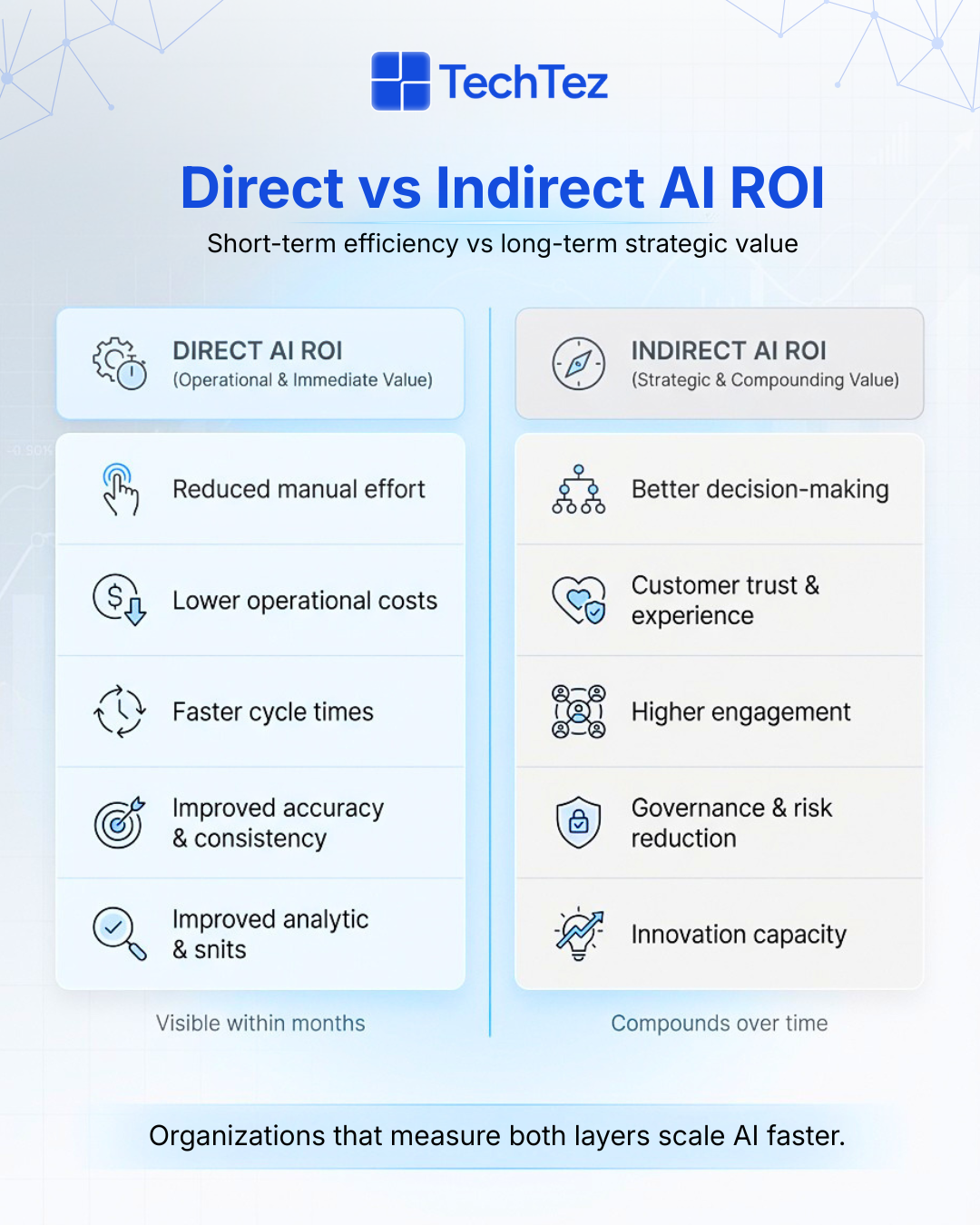

AI ROI appears in two distinct but complementary layers: direct and indirect.

Forward-looking organizations recognize that indirect ROI often compounds over time and delivers significant long-term value.

💡 Executive Insight: AI initiatives with well-defined ROI metrics have a much higher chance of moving from proof of concept to production. According to a TechRadar study, clarity around value is a key driver in scaling AI projects successfully.

The Hidden Cost of Not Measuring AI ROI

The absence of AI ROI rarely results in visible failure. Instead, it leads to gradual erosion. AI initiatives continue to operate, but leadership engagement weakens, funding becomes cautious, and strategic relevance fades. Over time, AI becomes something the organization has rather than something it actively scales.

This erosion creates fragmentation. Teams deploy AI solutions independently, optimizing local efficiency without enterprise alignment. Governance becomes inconsistent, data practices diverge, and duplication of effort increases. None of this appears immediately on a balance sheet, but it quietly increases operational and compliance risk.

The greatest cost is missed opportunity. AI that could have scaled and compounded value remains underutilized simply because its impact was never clearly articulated. In many organizations, AI does not fail; it stalls.

Before calculating ROI, consider the cost of not measuring it:

- Unclear Value: AI pilots lack success criteria

- Budget Resistance: Leadership hesitates to fund scaling

- Shadow AI: Teams deploy AI tools without governance

- Missed Opportunities: High-impact use cases fail to expand

- Strategic Drift: AI initiatives don’t align with business priorities

How AI Pilots Lose Momentum Without ROI

AI pilots often deliver real operational improvements. Processes run faster, errors decline, and teams report better insights. However, without defined ROI metrics, these improvements remain anecdotal. When pilots reach executive review, results are described qualitatively rather than defensibly.

This makes scaling decisions difficult. Leadership hesitates to approve additional funding, not because the pilot underperformed, but because the business case lacks rigor. As a result, pilots linger in a gray zone, neither scaled nor shut down. Over time, ownership fades and momentum is lost. Clear ROI criteria give pilots a trajectory. They turn early success into a credible case for enterprise rollout.

Governance and Budget Risks of Unmeasured AI

When AI ROI is unclear, governance weakens first. Teams make independent decisions about tools, vendors, and models, often without a shared understanding of value. This leads to inconsistent controls, fragmented architectures, and rising compliance exposure.

Budgeting becomes reactive rather than strategic. Funding decisions are influenced by advocacy instead of evidence, favoring teams that communicate well over those that deliver the most impact. This dynamic undermines trust and slows organizational learning.

ROI measurement restores discipline. It anchors governance and budget decisions in transparent, comparable outcomes, allowing leadership to manage AI as a coordinated capability rather than a collection of experiments.

How to Calculate AI ROI

At its foundation, AI ROI follows a familiar business formula. The return on investment is calculated by comparing the annual benefits generated by AI against the total cost of building, deploying, and maintaining AI systems.

However, the challenge lies not in the formula itself, but in identifying and quantifying the right benefits and costs.

This is especially important for generative AI initiatives, where productivity improvements, decision support, and knowledge automation can create both direct and indirect business value.

The formula is:

AI ROI (%) = (Annual AI Benefits - AI Costs) ÷ AI Costs × 100

Applying this formula to AI, however, requires thoughtful modeling and cross-functional alignment.

Effective AI ROI frameworks treat measurement as an ongoing process rather than a one-time calculation performed at project approval.
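
As a rough illustration, the formula above can be sketched in a few lines of Python. The function name and the dollar figures are hypothetical and chosen only to make the arithmetic easy to follow. The sketch assumes that benefits and costs are measured over the same one-year period.

```python
def ai_roi_percent(annual_benefits: float, ai_costs: float) -> float:
    """Return AI ROI as a percentage: (benefits - costs) / costs * 100."""
    if ai_costs <= 0:
        raise ValueError("ai_costs must be positive")
    return (annual_benefits - ai_costs) / ai_costs * 100


# Hypothetical figures for illustration only (not from any real project).
annual_benefits = 1_500_000.0  # e.g. savings plus revenue uplift over one year
ai_costs = 1_000_000.0         # total cost over the same year

roi = ai_roi_percent(annual_benefits, ai_costs)
print(f"AI ROI: {roi:.1f}%")  # prints "AI ROI: 50.0%"
```

A negative result means costs exceeded benefits for the period, which is common early in an initiative and is one reason to recompute the figure as benefits accumulate.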

What Counts as AI Benefits

AI benefits rarely appear as a single metric. Early gains often show up as efficiency improvements, but their true value emerges when those improvements influence decisions or resource allocation. Faster forecasts matter only if they change planning behavior.

Strong ROI frameworks capture these causal chains. They link operational improvements to financial and strategic outcomes, ensuring that AI value is neither overstated nor overlooked.

Over time, indirect benefits such as better decision quality and increased organizational agility often surpass direct gains. Mature organizations recognize and track this compounding effect.

AI benefits often appear across multiple dimensions:

- Time savings through automation or AI-assisted workflows

- Cost reductions from fewer errors and rework

- Revenue uplift from improved recommendations, pricing, or targeting

- Risk reduction through predictive analytics

- Productivity gains across teams

As AI adoption deepens, these benefits often compound, delivering increasing returns over time.

What Counts as AI Costs

AI costs extend far beyond initial development. Infrastructure, data pipelines, cloud consumption, integration, monitoring, governance, and change management all contribute to total cost of ownership.

Underestimating these costs may inflate short-term ROI but damages credibility over time. Executives prefer conservative assumptions that hold up under scrutiny.

Transparent cost modeling builds trust. It signals maturity and supports sustainable investment decisions.

The main cost categories include:

- Data infrastructure and cloud consumption

- AI platforms and tooling

- Engineering and system integration

- Training and change management

- Ongoing monitoring, governance, and maintenance

Mature ROI models also incorporate opportunity gains, such as faster time-to-market or improved decision velocity.
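
One way to keep cost modeling transparent is to itemize each category and annualize it before it enters the ROI calculation. The sketch below is a minimal illustration, not a prescribed method: the `CostItem` structure, the three-year straight-line amortization of one-time spend, and all figures are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class CostItem:
    category: str
    one_time: float = 0.0   # up-front spend, spread evenly over the amortization period
    recurring: float = 0.0  # spend per year


def annual_cost(items: list[CostItem], amortization_years: int = 3) -> float:
    """Annualized total cost: recurring spend plus amortized one-time spend."""
    if amortization_years <= 0:
        raise ValueError("amortization_years must be positive")
    return sum(item.recurring + item.one_time / amortization_years for item in items)


# Hypothetical figures for illustration only.
costs = [
    CostItem("Data infrastructure and cloud consumption", recurring=200_000),
    CostItem("AI platforms and tooling", recurring=100_000),
    CostItem("Engineering and system integration", one_time=600_000),
    CostItem("Training and change management", one_time=150_000),
    CostItem("Monitoring, governance, and maintenance", recurring=120_000),
]

total = annual_cost(costs, amortization_years=3)
print(f"Annualized AI cost: {total:,.0f}")  # prints "Annualized AI cost: 670,000"
```

Listing every category explicitly makes it harder to leave out integration or change-management spend, which is where underestimates tend to hide.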

KPIs That Matter for Measuring AI ROI

Executives do not want more metrics. They want clearer signals. KPIs matter only if they influence decisions about scaling, optimization, or exit.

Operational KPIs show whether AI changes how work is done. Financial KPIs translate those changes into economic impact. Strategic KPIs justify long-term investment by showing improvements in decision quality, resilience, and customer trust.

A disciplined KPI framework prioritizes relevance over volume. Measuring less, but measuring well, builds confidence and accelerates action.

Operational KPIs

Operational KPIs demonstrate how AI improves efficiency:

- Cycle time reduction

- Error rate reduction

- Automation coverage

- Cost per transaction
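
Operational KPIs such as cycle time and error rate are typically reported as a before-and-after change. A minimal sketch of that comparison follows. The helper name and the baseline and post-deployment values are hypothetical.

```python
def percent_reduction(before: float, after: float) -> float:
    """Percentage reduction from a baseline value to a post-deployment value."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


# Hypothetical before/after measurements for illustration only.
cycle_time_hours = (48.0, 36.0)      # average processing time per case
errors_per_thousand = (40.0, 30.0)   # errors per 1,000 transactions

print(f"Cycle time reduction: {percent_reduction(*cycle_time_hours):.1f}%")
print(f"Error rate reduction: {percent_reduction(*errors_per_thousand):.1f}%")
```

A negative result indicates the metric got worse after deployment, which is worth surfacing rather than hiding.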

Financial KPIs

Financial KPIs translate AI outcomes into financial terms:

- Cost savings

- Revenue uplift

- Margin improvement

- Payback period
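
Of these, payback period is the simplest to compute: the up-front investment divided by the net benefit per period. The sketch below uses the simple, undiscounted form and assumes a constant monthly net benefit. The function name and figures are hypothetical.

```python
def payback_period_months(upfront_investment: float, monthly_net_benefit: float) -> float:
    """Simple (undiscounted) payback period in months."""
    if monthly_net_benefit <= 0:
        raise ValueError("monthly_net_benefit must be positive, otherwise the investment never pays back")
    return upfront_investment / monthly_net_benefit


# Hypothetical figures for illustration only.
months = payback_period_months(upfront_investment=600_000, monthly_net_benefit=50_000)
print(f"Payback period: {months:.1f} months")  # prints "Payback period: 12.0 months"
```

In practice benefits usually ramp up over time rather than arriving at a constant rate, so a cumulative month-by-month calculation may give a more realistic figure.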

Strategic KPIs

Strategic KPIs reflect long-term value:

- Decision accuracy

- Forecast reliability

- Customer satisfaction (CSAT / NPS)

- Risk reduction

💡 Rule of Thumb: If a KPI doesn’t influence executive decisions, it doesn’t belong in an AI ROI dashboard.

Building AI ROI Dashboards for Executive Buy-In

Dashboards are where AI value becomes visible or disappears. Executives do not use dashboards to analyze models; they rely on continuous monitoring and outcome-driven dashboards to orient decisions quickly. Effective dashboards lead with outcomes, not technical detail. They show trends, highlight exceptions, and make assumptions transparent. When dashboards consistently answer executive questions without explanation, AI initiatives gain momentum.

What Executives Expect to See

Executives want dashboards that provide:

- Clear ROI summaries and trends

- Before-and-after comparisons

- Direct links between AI initiatives and business KPIs

- Transparent assumptions and risks

- Signals of scalability and sustainability

Dashboard Best Practices

High-performing AI ROI dashboards:

- Lead with outcomes, not model metrics

- Emphasize trends over static snapshots

- Align KPIs with strategic priorities

- Use consistent definitions across teams

This approach aligns with guidance from organizations such as McKinsey & Company and Gartner, which emphasize outcome-driven AI measurement over technical reporting.

AI ROI Across Business Functions

AI ROI becomes tangible when mapped to business functions. Finance, sales, operations, HR, and customer support each experience value differently. Function-level visibility allows leadership to see where AI delivers the greatest impact and where returns are marginal. This perspective supports smarter portfolio decisions and prevents over-investment in low-leverage use cases.

What often accelerates executive adoption is not a single enterprise-wide metric, but a collection of smaller, credible wins across departments. When each function reports measurable outcomes tied to its core KPIs, leadership can see how AI contributes to overall performance rather than isolated efficiency gains. Over time, these localized improvements compound into enterprise-level impact.

AI also changes how functions collaborate. For example, improved forecasting in finance influences procurement and operations planning. Sales predictions affect staffing and inventory decisions. Customer support insights inform product and marketing strategy. Measuring ROI at the functional level makes these interdependencies visible, helping executives understand AI as an organizational capability rather than a departmental tool.

Another important benefit of function-based ROI tracking is accountability. Each department owns its outcomes, making success easier to attribute and replicate. Instead of debating abstract enterprise benefits, leaders can identify which workflows improved, why they improved, and how similar approaches can scale elsewhere.

Function-Level AI ROI Examples

- Finance: Forecasting, fraud detection → reduced losses, improved cash flow

- Sales: Lead scoring, pricing optimization → higher conversion and revenue growth

- Operations: Demand forecasting → improved efficiency and resilience

- HR: Attrition prediction → lower churn, better hiring outcomes

- Customer Support: AI agents → faster resolution, higher customer satisfaction

Mapping ROI to core functions reinforces AI’s role as a business enabler.
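
To make the portfolio view concrete, function-level results can be ranked by ROI so that high-impact and marginal use cases are easy to tell apart. The sketch below is illustrative only: the benefit and cost figures per function are invented, and the ranking uses the same benefits-minus-costs-over-costs formula described earlier.

```python
def roi_percent(benefits: float, costs: float) -> float:
    """ROI as a percentage: (benefits - costs) / costs * 100."""
    if costs <= 0:
        raise ValueError("costs must be positive")
    return (benefits - costs) / costs * 100


# Hypothetical annual (benefits, costs) per business function, for illustration only.
function_results = {
    "Finance": (900_000, 600_000),
    "Sales": (1_200_000, 1_000_000),
    "Operations": (700_000, 400_000),
    "HR": (220_000, 200_000),
    "Customer Support": (480_000, 300_000),
}

# Rank functions from highest to lowest ROI.
ranked = sorted(
    ((name, roi_percent(b, c)) for name, (b, c) in function_results.items()),
    key=lambda pair: pair[1],
    reverse=True,
)

for name, roi in ranked:
    print(f"{name:<18}{roi:>6.0f}%")
```

A ranking like this is a starting point for discussion rather than a verdict, since functions with lower ROI may still carry strategic or risk-reduction value that the percentage does not capture.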

Platforms such as UiPath, Celonis, and Automation Anywhere are increasingly embedding ROI analytics directly into AI workflows.

💡 Key Insight: AI ROI is clearest when mapped to business-critical KPIs, not isolated technical benchmarks. SAP outlines this approach in its guidance on maximizing AI ROI.

Final Thoughts: AI ROI Builds Executive Trust

The real risk with AI isn’t over-investing; it’s failing to prove value. AI initiatives rarely collapse because models perform poorly. They lose momentum when leadership cannot connect technical progress to business progress. Executive confidence grows when AI moves from isolated success stories to predictable performance indicators that behave like any other investment. ROI provides that predictability.

When organizations consistently measure AI impact, decision-making changes. Funding discussions become faster, governance becomes clearer, and prioritization shifts from intuition to evidence.

Ultimately, AI maturity is not defined by how many models an organization deploys, but by how confidently leadership can invest in them. Clear ROI transforms AI from a promising innovation into a dependable operating advantage, one that executives are willing to scale, budget for, and embed into long-term strategy.

At TechTez, we help organizations move beyond experimental AI metrics and focus on outcome-driven ROI frameworks. If you want to integrate AI into your workflows but are unsure where to start or how to prioritize high-impact use cases, reach out to us at contact@techtez.com to begin building a practical, outcome-driven AI roadmap.